Playing Catchup

I seem to have got out of the blogging habit, so I'm hoping to catch up on a few posts now. I'll tweak the dates so they're relevant to the events roughly as they happened (chronology? what's that?!)

The first event I'd like to make a post about was the excellent -

Web Standards Group Meeting on JavaScript

Some of us shy away from JavaScript (until recently, myself included) on the grounds that it's not accessible. But these days, if it's done right, it can be positively beneficial to accessibility.

Demystifying Screen Readers - Steve Faulkner

Steve is very knowledgeable on screen readers and all their foibles, and is Director of the Web Accessibility Tools Consortium. This talk mainly centred on JAWS (65%) and Window Eyes (35%). The bracketed figures are from a US National Federation of the Blind market share survey - it's obvious these are the two big players.

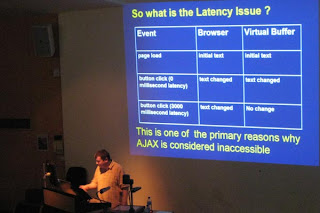

The key issues revolve around:

- Dynamic updates - user-initiated and independent

  - Can the user access the updated content?

  - Is the user aware that the content has been updated?

- Rich Internet Applications (RIA)

  - Can the user understand the role of the control?

  - Can the user successfully interact with the control?

  - Is the user able to access information about the current state of the control?

- Browse Mode (virtual buffer) - the user can navigate page content via paragraphs, headings, links, lists etc. They can also activate links and some form controls. But in this mode they can't type text into form fields or interact with select elements.

- Forms Mode (browse mode off) - the user may only navigate through a document to focusable elements via the TAB key. Text access is limited to "read all" functionality. Most advanced content navigation is unavailable.
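For the rich-application questions in the list above (role, interaction, state), one possible approach is WAI-ARIA markup. This is a minimal sketch of my own rather than anything shown in the talk: `makeCheckbox` and `toggleCheckbox` are hypothetical helper names, and how well a given screen reader version reports these attributes is something you'd need to test.

```javascript
// Hypothetical helper: turn a generic element into a checkbox-like control.
function makeCheckbox(el, checked) {
  el.setAttribute("role", "checkbox");  // tells the screen reader what the control is
  el.setAttribute("tabindex", "0");     // makes it reachable with the TAB key
  el.setAttribute("aria-checked", checked ? "true" : "false"); // exposes its state
}

// Hypothetical helper: flip the state and return the new value.
function toggleCheckbox(el) {
  var checked = el.getAttribute("aria-checked") !== "true";
  el.setAttribute("aria-checked", checked ? "true" : "false");
  return checked;
}
```

In a real page you would call `toggleCheckbox` from both a click handler and a keydown handler for the space bar, so keyboard users can operate the control too.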

[Steve Faulkner and the Latency Issue]

Latency is a problem because the virtual buffer does not update and the user doesn't know anything has changed. However, JAWS v7.1 started "listening" for virtual buffer updates in response to things like:

- window.setInterval()

- object.innerText (for IE)

- object.textContent and object.appendChild (in Firefox)

- changes in form control values

- And other stuff like ALT or TITLE attribute value changes.

Steve's recommendations:

- Do not code to accommodate the poor support shown by JAWS and Window Eyes.

- Use unobtrusive methods where available and appropriate, to help screen readers along.

- Don't use "JavaScript / Ajax is not accessible to screen readers" as an excuse for not bothering to design for accessibility.

- Start developing interface elements that use WAI-ARIA specs, which will provide some benefits now and many more in the future.
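To make the dynamic-update points a bit more concrete, here is a rough sketch of how a script might update a message on the page while also flagging the change to assistive technology. It is my own illustration, not code from the talk: `setText` and `announce` are hypothetical names, the fallback reflects the `innerText` / `textContent` split noted above, and `aria-live` support differs between screen reader versions.

```javascript
// Hypothetical helper: write text using whichever property the browser supports.
function setText(el, text) {
  if ("textContent" in el) {
    el.textContent = text;   // Firefox and other standards-based browsers
  } else {
    el.innerText = text;     // older versions of IE
  }
}

// Hypothetical helper: mark the element as a live region, then update it.
function announce(el, text) {
  // "polite" asks the screen reader to report the change when the user is idle.
  el.setAttribute("aria-live", "polite");
  setText(el, text);
}
```

On a page, something like `announce(document.getElementById("status"), "3 new messages")` would do the update, assuming an element with the (made-up) id `status` exists.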

[Christian's been on the beanz again]

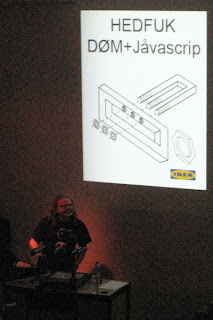

Christian's notes are available for download from his blog, so I won't repeat them verbatim. Needless to say, it was a fun presentation and contained the obligatory photo of a kitten ;-). Meanwhile, he's thinking of this as the title of his next book:

[Christian's Next Book?]

PubStandards XVIII

Of course, the next item on the social agenda was the PubStandards gathering. Lots of fun and revelry as usual. Here's one photo, but you can see more on Flickr.

[Patrick & Ashe go head-to-head, while Ross butts in the middle]
